Zigguart - Quart accelerator written in Zig

Introduction

I run a finance web app built with Quart, the async-native counterpart to Flask. I chose Python for a practical reason: finance is my main domain, and most of the people around me understand Python. Very few of them work comfortably at the systems level, so in many cases I still need to stay grounded in Python.

At the same time, I have been building tools that let me push more of the heavy finance data work down into C. One of those projects is a Pandas-compatible replacement aimed at keeping more of the pipeline at the C level, so I can avoid climbing back up the stack unless I actually need Python. But that's a story for a different article.

In this post I lay out how I built Zigguart, a Quart accelerator written in Zig, and how it lets a Python web framework sustain far higher throughput.

Moving Quart Off the Hot Path

I think the easiest way to misunderstand Zigguart is to treat it as one more attempt to make a Python web stack a little faster. That description catches the surface of the project, but it misses the real point.

Zigguart is interesting because it changes where the work happens. Instead of assuming that every request has to become a normal Quart request, it asks a more useful question: which requests actually need Quart (and Python) at all?

That question matters because ordinary performance tuning has a pretty low ceiling. You can make JSON a bit faster, reduce copies, improve pooling, and clean up middleware ordering, all of which helps. Even after all that, the basic shape of the request is still the same. The server accepts the connection, Python owns the request lifecycle, the framework builds its abstractions, and the application runs. A better version of the usual path is still the usual path.

Zigguart is trying to break that assumption. In a standard Quart deployment, the picture is straightforward:

Internet -> Granian -> Quart

With Zigguart Mode B, it becomes:

Internet -> Zigguart -> Quart

That is the single change at the architectural level: Zigguart moves in front of Quart and becomes the server that sees the request first. Inside Zigguart, the flow is more detailed. A request can be rejected early, served from the Zig cache, or passed through the embedded Python bridge into Quart. The important point here is simpler than the internal mechanics. Quart stops being the thing that has to own every request from the beginning.
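The three outcomes above (reject early, serve from cache, fall back to Quart) can be sketched in a few lines. This is a minimal Python illustration of the decision order only, not Zigguart's actual code, which lives in Zig. The names `handle`, `MAX_BODY_BYTES`, and `call_quart` are hypothetical, and the caching rule in step 3 is an assumption made for the example.

```python
from typing import Callable, Dict, Tuple

# Hypothetical limit for the early-reject step; the real bounds are not stated in this post.
MAX_BODY_BYTES = 1_000_000

Response = Tuple[int, bytes]  # (status code, body)


def handle(
    method: str,
    path: str,
    body: bytes,
    cache: Dict[Tuple[str, str], Response],
    call_quart: Callable[[str, str, bytes], Response],
) -> Tuple[str, Response]:
    """Return the name of the path that served the request, plus the response."""
    # 1. Reject early: a cheap check that never touches the cache or Python.
    if len(body) > MAX_BODY_BYTES:
        return "rejected", (413, b"payload too large")

    # 2. Serve from the front cache if this request has been answered before.
    key = (method, path)
    if key in cache:
        return "cache", cache[key]

    # 3. Fall back to the framework, then remember successful GET responses
    #    (assumed rule, for illustration only).
    response = call_quart(method, path, body)
    if method == "GET" and response[0] == 200:
        cache[key] = response
    return "quart", response
```

The point of the ordering is that the framework callback sits last: a request only reaches `call_quart` after both cheaper exits have been ruled out.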

The Central Idea

The most accurate short description: Zigguart moves Quart off the hot path without giving up Quart. That is the real ambition: it keeps Quart where Quart is useful and tries to erase Quart where Quart has become repetitive overhead, while not forcing a rewrite to another framework.

This is what makes the project more interesting than generic "Python, but faster" marketing. A lot of performance work in web applications still lives inside the assumption that every request must become an application request. In practice, many high-traffic routes are stable enough that rebuilding the whole framework path on every hit is simply wasteful. If a response can be replayed safely, the fastest place to do that is in front of the framework, not inside it.

That is the real design move. Zigguart puts a native layer in the only place where order-of-magnitude wins are even available: before the Python framework wakes up. Once you do that, repeated requests stop being opportunities for micro-optimization and start becoming candidates for elimination.

Why The Warm Path Gets So Fast

The big headline numbers only make sense if you interpret them correctly. When Zigguart produces a large warm-route speedup, that does not mean template rendering became magically cheap or that database access suddenly improved by two orders of magnitude. It means the route is no longer taking the normal application path on repeated hits.

That is an important difference. On a real cache hit, Zigguart can skip the parts of the stack that usually dominate Python web serving:

- ASGI request handling

- Quart request construction

- framework routing

- Python scheduling

- GIL acquisition

- Python response construction

At that point the unit of work changes. The system is no longer "running the web app again." It is replaying a cached response from native memory. The server looks up the bytes, assigns the headers and body, and writes them to the socket. That is why the warm-path numbers can become so dramatic without being dishonest.
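To make "replaying a cached response" concrete, here is a small Python sketch of what a warm hit reduces to: a lookup and a write. It simplifies by serializing the whole HTTP/1.1 response once and storing the raw bytes. That storage layout is an assumption for illustration and not a description of how cache.zig stores entries. `build_response_bytes` and `ReplayCache` are hypothetical names.

```python
from typing import Callable, Dict


def build_response_bytes(status: int, reason: str, headers: Dict[str, str], body: bytes) -> bytes:
    """Serialize an HTTP/1.1 response once so later hits can reuse the bytes."""
    lines = [f"HTTP/1.1 {status} {reason}"]
    lines += [f"{name}: {value}" for name, value in headers.items()]
    lines.append(f"Content-Length: {len(body)}")
    head = "\r\n".join(lines) + "\r\n\r\n"
    return head.encode("ascii") + body


class ReplayCache:
    """Maps a path to pre-built response bytes (illustrative stand-in only)."""

    def __init__(self) -> None:
        self._store: Dict[str, bytes] = {}

    def put(self, path: str, raw: bytes) -> None:
        self._store[path] = raw

    def replay(self, path: str, write: Callable[[bytes], object]) -> bool:
        """On a hit, hand the stored bytes to `write` and report True."""
        raw = self._store.get(path)
        if raw is None:
            return False  # a miss: the caller would fall back to the framework
        write(raw)  # no routing, no request object, no rendering
        return True
```

In a real server `write` would be the socket's send call. Nothing in `replay` depends on the application, which is the whole reason the hit is cheap.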

The project is not claiming that Python itself has become absurdly fast. It is showing that repeated traffic can often avoid Python entirely.

This is also why Zigguart feels more like an accelerator than a typical server. A normal high-performance ASGI server is still excellent at serving Python requests. Zigguart is strongest when it can prevent a request from needing to become a Python request at all, which means avoiding Python-level work wherever possible.

If you have been paying attention, you will notice that I keep repeating the point about avoiding unnecessary work. It is a simple design principle that people often forget: don't process what doesn't need processing, and don't try to be "sophisticated" by adding unnecessary complexity to your design. Return to the first principles of design.

What Actually Makes The Design Strong

The first major strength is obvious once you look at the control flow. Zigguart gives the earliest decision to Zig. That matters more than any isolated micro-optimization, because the earlier you decide, the more work you can avoid. If Zig were still sitting behind Quart, the ceiling would stay modest. Moving the native layer in front of Quart is what gives the design its headroom.

The second strength is the cache itself. cache.zig is not just a storage mechanism. It is part of the execution model. The cache is in-process, Zig-owned, and consulted before the Python fallback path. A hot hit is served from the native server path, not from a Python callback that happens to fetch something cached. That distinction is easy to miss, and it is the reason the project cannot be reduced to "the app has caching now."

The third strength is that Zigguart is not transport-only. It has app-facing acceleration concepts because the goal is not merely to parse HTTP quickly. It tries to understand when an application route can be short-circuited safely. That is why route classification, @simple_json, @cached_route, and the promotion rules matter. They are attempts to define where application semantics can be preserved while the framework cost is avoided.

The fourth strength is narrower but still important: the project is very clearly Quart-first. That focus gives the work a real identity. Zigguart is not trying to be the universal answer for every Python async framework on day one. It is trying to make an existing Quart app fast without forcing a rewrite. That is a practical goal, and it is one reason the project feels more grounded than many performance experiments.

The fifth strength is easier to overlook because it does not show up in a flashy benchmark graph. Zigguart has spent real effort on correctness. Trusted proxy handling, cache-safety restrictions, request bounds, overload shedding, and shutdown behavior are all part of the story. Once Zig sits in front of the app, the server becomes part of the trust boundary. At that point, sloppiness turns a fast-path demo into a liability. Zigguart has mostly taken the harder route and treated the front layer like real infrastructure.
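As an illustration of what "cache-safety restrictions" can look like, here is a conservative predicate in Python. The specific rules below are assumptions chosen for the example (common HTTP caching hygiene), not a list of the checks Zigguart actually performs, and `is_safe_to_cache` is a hypothetical name.

```python
from typing import Mapping


def is_safe_to_cache(
    method: str,
    request_headers: Mapping[str, str],
    status: int,
    response_headers: Mapping[str, str],
) -> bool:
    """Return True only when replaying the response to other clients looks safe."""
    # Header names are case-insensitive, so normalize before checking.
    req = {k.lower(): v for k, v in request_headers.items()}
    resp = {k.lower(): v for k, v in response_headers.items()}

    if method != "GET":
        return False  # assumed rule: only safe, idempotent reads
    if status != 200:
        return False  # assumed rule: never replay errors or redirects
    if "authorization" in req or "cookie" in req:
        return False  # the response may be specific to one user
    if "set-cookie" in resp:
        return False  # replaying it would hand one session to everyone
    cache_control = resp.get("cache-control", "").lower()
    if "no-store" in cache_control or "private" in cache_control:
        return False  # the application asked not to be cached
    return True
```

The predicate defaults to "no". Once the front layer is part of the trust boundary, a wrongly cached personalized page is a much worse failure than a missed cache opportunity.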

The Core Components

One reason the project holds together conceptually is that the Mode B pieces have clear jobs. server.zig owns process startup, worker lifecycle, shutdown, and the main runtime. httpz gives the project a Zig-native HTTP/1.1 substrate without burying it in a full framework. cache.zig provides the warm-path engine. python_embed.zig keeps CPython and the fallback event loop alive inside the process. asgi_bridge.zig turns a miss into a real ASGI call and extracts the response back into the native side.

That last file is where the tradeoff becomes visible. It is the compatibility layer, and it is also the place where cold traffic still pays a lot. The bridge has to build request state, cross into Python, wait for the framework to run, and then bring the result back out. That is why the project is strongest when it can keep traffic away from this path.
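The work the bridge does on a miss can be approximated in pure Python. The sketch below builds an ASGI `http` scope, calls an ASGI 3 application, and collects the response messages back into plain values. It follows the public ASGI message format, but it is only a stand-in for what asgi_bridge.zig does from the native side; `call_asgi` and `demo_app` are hypothetical names, and the scope values are placeholders.

```python
import asyncio
from typing import List, Tuple


async def call_asgi(app, method: str, path: str, body: bytes = b""):
    """Drive one request through an ASGI 3 app and return (status, headers, body)."""
    # Step 1: build the request state the framework expects.
    scope = {
        "type": "http",
        "asgi": {"version": "3.0"},
        "http_version": "1.1",
        "method": method,
        "scheme": "http",
        "path": path,
        "raw_path": path.encode("ascii"),
        "query_string": b"",
        "headers": [],
        "server": ("127.0.0.1", 8000),  # placeholder address
        "client": ("127.0.0.1", 0),     # placeholder address
    }

    body_sent = False

    async def receive():
        nonlocal body_sent
        if not body_sent:
            body_sent = True
            return {"type": "http.request", "body": body, "more_body": False}
        return {"type": "http.disconnect"}

    status = None
    headers: List[Tuple[bytes, bytes]] = []
    chunks: List[bytes] = []

    # Step 3: extract the response as the app emits it.
    async def send(message):
        nonlocal status, headers
        if message["type"] == "http.response.start":
            status = message["status"]
            headers = list(message.get("headers", []))
        elif message["type"] == "http.response.body":
            chunks.append(message.get("body", b""))

    # Step 2: cross into the application and wait for it to finish.
    await app(scope, receive, send)
    return status, headers, b"".join(chunks)


async def demo_app(scope, receive, send):
    """Tiny ASGI app standing in for the real Quart application."""
    await send({
        "type": "http.response.start",
        "status": 200,
        "headers": [(b"content-type", b"text/plain")],
    })
    await send({"type": "http.response.body", "body": b"hello from the app"})
```

Running `asyncio.run(call_asgi(demo_app, "GET", "/summary"))` exercises the whole round trip. Every step in `call_asgi` is per-request work that a cache hit never performs, which is why cold traffic still pays a lot.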

I think the most elegant part of the design lives in python_embed.zig.

The code literally inverts the usual relationship between Python and the server. Python stops being the server and becomes a service living inside the server. That is more than an implementation detail. It is the architectural statement that makes the rest of Zigguart coherent.

What The Benchmark Numbers Really Say

The benchmark numbers only become meaningful once they are separated into warm and cold behavior. Warm traffic is where Zigguart proves the architecture. Repeated routes that can be cached and replayed are where the project becomes convincing. That is why the review numbers can show strong gains on routes like summary, market_page, and commodity_specific_oil, with much larger wins still on some paths.

The clearest data point is the latest warm Quart_FA comparison:

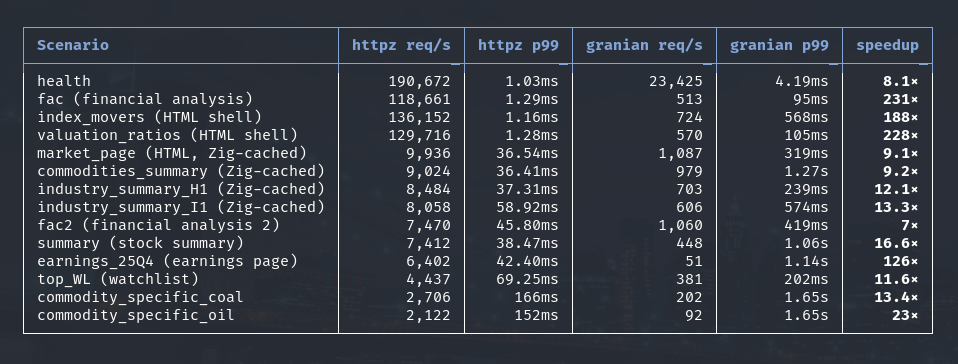

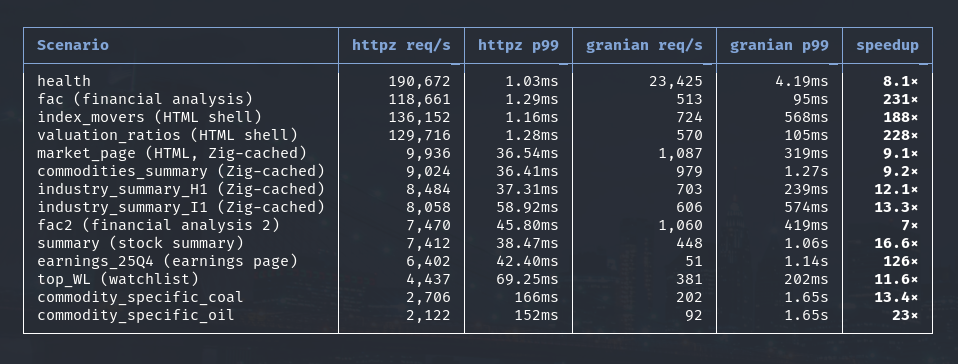

Latest results — zigguart-httpz vs zigguart-granian (warm traffic)

benchmark/results/20260321-105626 · wrk 4 threads · 64 connections · 10 s · Linux x86-64

These are real Quart_FA production routes: HTML pages, DB-backed stock summaries, commodity pages, and earnings tables. They are not the small JSON responses that most web framework benchmarks rely on. The only JSON response in the benchmark was the health endpoint, which returns 10 lines of nested JSON. The rest are full HTML pages.

The key point is that both sides of this benchmark already use the same Zigguart cache:

- zigguart-httpz: Zigguart cache + http.zig server path

- zigguart-granian: Zigguart cache + Granian server path

This is not "cached Zigguart" versus "uncached Granian": both combinations above receive the same Zig-cache benefit. Warm means the Zig cache is already populated, Python has already run once for that URL, and subsequent requests are served from cached responses. The remaining difference comes from the server path itself. Even after both sides get the same Zig-cache benefit, the standalone Zig server still serves those cached responses orders of magnitude faster than the Granian-backed path.

While those results are real, they also need to be described honestly: a 100x result does not mean Quart became 100x faster. It means the repeated request no longer travels through Quart in the ordinary way. The server has moved the work to a different place, and on the hot path that change dominates everything else.

The spread in the numbers is also informative. Smaller warm-route multipliers usually correspond to heavier bodies or routes that still have meaningful transport and replay cost even after caching. Very large multipliers show up when the old stack keeps paying Python costs for a route that Zigguart has reduced to a native cache hit. The numbers vary because the route shapes vary. That is exactly what you would expect from this design.

This is also the right place to mention the part that can sound like magic if it is not explained properly. The "Zig-cache magic" is not some mysterious extra optimization layer. It is simply the consequence of where the cache lives and who owns it. cache.zig sits in front of the fallback path, inside the server, and stores replayable responses as native server data.

On a warm hit, Zigguart is not "using Python more efficiently." It is skipping Python and replaying the response from Zig. That is the mechanism behind the benchmark table above.

Cold traffic tells a different story. A cold miss is expensive as it still has to pay for the bridge, for Python scheduling, for response extraction, and for whatever work the application itself does on that miss. On Quart_FA, that application-side cost is not small. Startup warmers, CSV loading, SQL fan-out, pandas transformations, and template rendering all pile on top of the server’s own fallback work. That is why the project looks much less dominant on cold misses than on warm hits. It is not a contradiction. It is the natural limit of the current architecture.
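The warm and cold split is easy to reason about with a little arithmetic. The sketch below computes the average per-request latency for a given cache hit ratio. The function name `blended_latency_ms` is hypothetical, and the 0.1 ms and 200 ms figures in the usage note are made-up inputs, not measurements from the benchmark.

```python
def blended_latency_ms(hit_ratio: float, warm_ms: float, cold_ms: float) -> float:
    """Average latency when a fraction `hit_ratio` of requests are warm hits."""
    if not 0.0 <= hit_ratio <= 1.0:
        raise ValueError("hit_ratio must be between 0 and 1")
    # Each request is either a warm hit or a cold miss, weighted by how often each occurs.
    return hit_ratio * warm_ms + (1.0 - hit_ratio) * cold_ms


def miss_share(hit_ratio: float, warm_ms: float, cold_ms: float) -> float:
    """Fraction of total serving time that is spent on cold misses."""
    total = blended_latency_ms(hit_ratio, warm_ms, cold_ms)
    return ((1.0 - hit_ratio) * cold_ms) / total if total > 0 else 0.0
```

With the illustrative inputs `warm_ms=0.1` and `cold_ms=200.0`, calling `miss_share(0.99, 0.1, 200.0)` shows that even at a 99% hit ratio almost all of the serving time goes to the 1% of requests that miss. That is the arithmetic behind treating the cold path as the next engineering priority.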

Where Zigguart Fits Among Existing Servers

Zigguart is closest in spirit to Granian. That is the most useful comparison, because Granian also moves serving work out of the traditional Python stack and tries to make the transport layer efficient enough that the framework stops dominating every request. Uvicorn and Hypercorn are still useful reference points, but they occupy a different role. They are broader, more mature, general-purpose ASGI servers.

The distinction matters because Zigguart is not trying to win by breadth. It is trying to win by changing the hot path.

Uvicorn and Hypercorn want to be reliable servers for Python apps in general. Granian wants to be a very fast server for Python apps. Zigguart is trying to become a server where the hot path often stops being a Python application request at all.

That gives it a narrower identity, but also a more distinctive one. The fairest way to describe Zigguart at this stage is probably this: it is an early-stage, Quart-first, Granian-like server with an additional application-acceleration layer. That is a better description than calling it a drop-in general-purpose replacement for every mature ASGI server, because it tells you what the project is actually optimizing for.

Why Quart Matters

The Quart focus is more than branding. It shapes the whole project. In a lot of performance conversations, the implicit answer is that if you care enough, you should move to a different framework. Sometimes that is the right answer. In other cases, the app already exists, the framework fits the product, and the real problem sits lower in the stack.

Zigguart comes from that second situation. It assumes the Quart app is worth keeping and that the serving model is the part that should change. That is why the project feels more practical than a full framework rewrite. It also explains why it has app-facing concepts instead of staying purely transport-shaped. The project is not chasing abstract server speed in a vacuum. It is trying to keep Quart where Quart is valuable and remove Quart where it has become repetitive machinery.

The Redis Question

The obvious objection is that this all sounds like a more complicated way of describing a cache, and that Redis already exists. Redis is excellent at shared storage, cross-worker reuse, persistence across restarts, and centralized invalidation. A Quart app can absolutely benefit from Redis.

What Redis does not do is change who serves the request. A Redis-backed Quart app still moves through the normal Python server path before the cached value becomes useful. Zigguart solves a different problem. Its cache sits directly in the serving path, in native memory, in front of Python. A hot hit can be served before the framework is involved at all.

That is why Redis is better understood as a possible complement, not a replacement. If Zigguart ever wanted a second-level shared cache, Redis would be a sensible L2. Replacing cache.zig with Redis entirely would weaken the most valuable part of the project, because the hot path would stop being a pure in-process native response path.
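The L1/L2 arrangement described above can be sketched as follows. This is a hypothetical design, since the post only says Redis would be a sensible L2 if Zigguart ever wanted one. `TwoLevelCache` and `FakeSharedStore` are invented names, and the shared store is an in-memory stand-in exposing `get` and `set` rather than a real Redis client.

```python
from typing import Dict, Optional, Tuple


class FakeSharedStore:
    """In-memory stand-in for a shared store such as Redis (illustration only)."""

    def __init__(self) -> None:
        self._data: Dict[str, bytes] = {}

    def get(self, key: str) -> Optional[bytes]:
        return self._data.get(key)

    def set(self, key: str, value: bytes) -> None:
        self._data[key] = value


class TwoLevelCache:
    """L1 is private to one worker process; L2 is shared across workers."""

    def __init__(self, l2: FakeSharedStore) -> None:
        self.l1: Dict[str, bytes] = {}
        self.l2 = l2

    def get(self, key: str) -> Tuple[str, Optional[bytes]]:
        # Hot path: in-process lookup, no network hop.
        if key in self.l1:
            return "l1", self.l1[key]
        # Slower path: ask the shared store, then promote the value into L1.
        value = self.l2.get(key)
        if value is not None:
            self.l1[key] = value
            return "l2", value
        return "miss", None

    def put(self, key: str, value: bytes) -> None:
        self.l1[key] = value
        self.l2.set(key, value)
```

In this arrangement a second worker that has never rendered a page can still avoid the application on its first request, while repeated hits stay entirely in process. Swapping L1 out for the shared store would put a network round trip on every hit, which is the weakening the paragraph above describes.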

If you ask "why not just use Redis?", you're asking the wrong question. The useful question is whether it is valuable to move repeated traffic off the Python request path completely. Zigguart is built around the idea that the answer is yes: avoid Python-level work wherever possible.

Where The Project Is Still Weak

The weak spot is cold traffic, and that needs to be stated plainly. The architecture shines when it can avoid the fallback path. It is much less impressive when it has to take the fallback path and the application itself is heavy on a miss.

That does not mean the architecture is wrong. It means the project is still living with the cost of compatibility. The fallback bridge is real work. The app behind it is also real work. In Quart_FA, that means cold summary requests are carrying startup contention, data loading, SQL work, dataframe processing, and template rendering on top of the bridge cost.

So the honest reading is simple. Warm traffic already proves the core idea. Cold traffic shows where the unfinished engineering remains. That is not a bad place for the project to be, but it does make the next priorities clear.

Why The Architecture Is Still Worth Building

Even with the cold-path weakness, I still think the architecture is worth pursuing because it is pointed at the right problem. The biggest wins in serving usually come from moving responsibility to the right place in the stack. Zigguart has done that. By putting the native layer in front of the framework, it has given itself a chance at very large wins that would simply never appear inside the ordinary Python request path.

It also avoids the ridiculousness of "rewrite the app in X language" as the only way forward. The project keeps Quart for the complex path and strips Quart out of the repetitive path. That is a much more attractive migration story than telling a working application to change its framework for the sake of the server.

At this stage I would not describe Zigguart as the final form of a general purpose Python async server. I would describe it as a serious Quart-first server and accelerator that has already proved one very important point: moving the serving decision in front of the Python framework changes the economics of a real web app in a meaningful way.

Conclusion

Zigguart's core components are written in Zig, a low-level systems programming language that aims to be "a better C". If you follow my posts, you know I love writing C programs, so much so that I modified my C version to use safe_c.h and then built cgrep and cforge with it.

Plenty of projects wrap Python in a faster language, but I feel Zig is special because its structure maps almost 1:1 onto C, and it makes working at a low level easier than C does. What makes Zigguart unique is that it gives Quart a different serving model while still letting the application remain a Quart application.

I think this is the real architectural contribution: it changes how repeated traffic is served, it explains the warm-path benchmark numbers cleanly, and it points to an alternative approach where the framework is used where it adds value instead of where habit placed it. By habit I mean the old default pattern where every request automatically goes through the Python framework.

The project is not finished. The cold path still needs real work. The maturity gap with broader servers is still there. Even so, the central idea has already proved itself. Zigguart shows that a Quart app does not have to live forever inside the full Python request path, and that is a strong enough result to make the project worth continuing.
